Quick Start

MimiSDK makes it easy to add advanced audio personalization to your app with a Mimi Hearing ID and Mimi Sound Personalization. It offers an intuitive, highly customizable UI built on a powerful audio core, so you can deliver advanced Hearing Tests and personalized audio to your users.

Our comprehensive API supports everything from complex custom integrations to advanced sound personalization in a few lines of code.

Key Features

- Provide advanced Hearing Tests using our latest testing technologies.

- Use Mimi Sound Personalization to provide a sound experience like no other.

- Powerful yet simple customization with an advanced theming engine and comprehensive open APIs.

Overview

MimiSDK is composed of multiple frameworks, each responsible for a distinct functional area. This keeps the SDK flexible enough to offer simple integration while also exposing powerful low-level APIs where required. Your exposure to each framework will depend on the level of your integration.

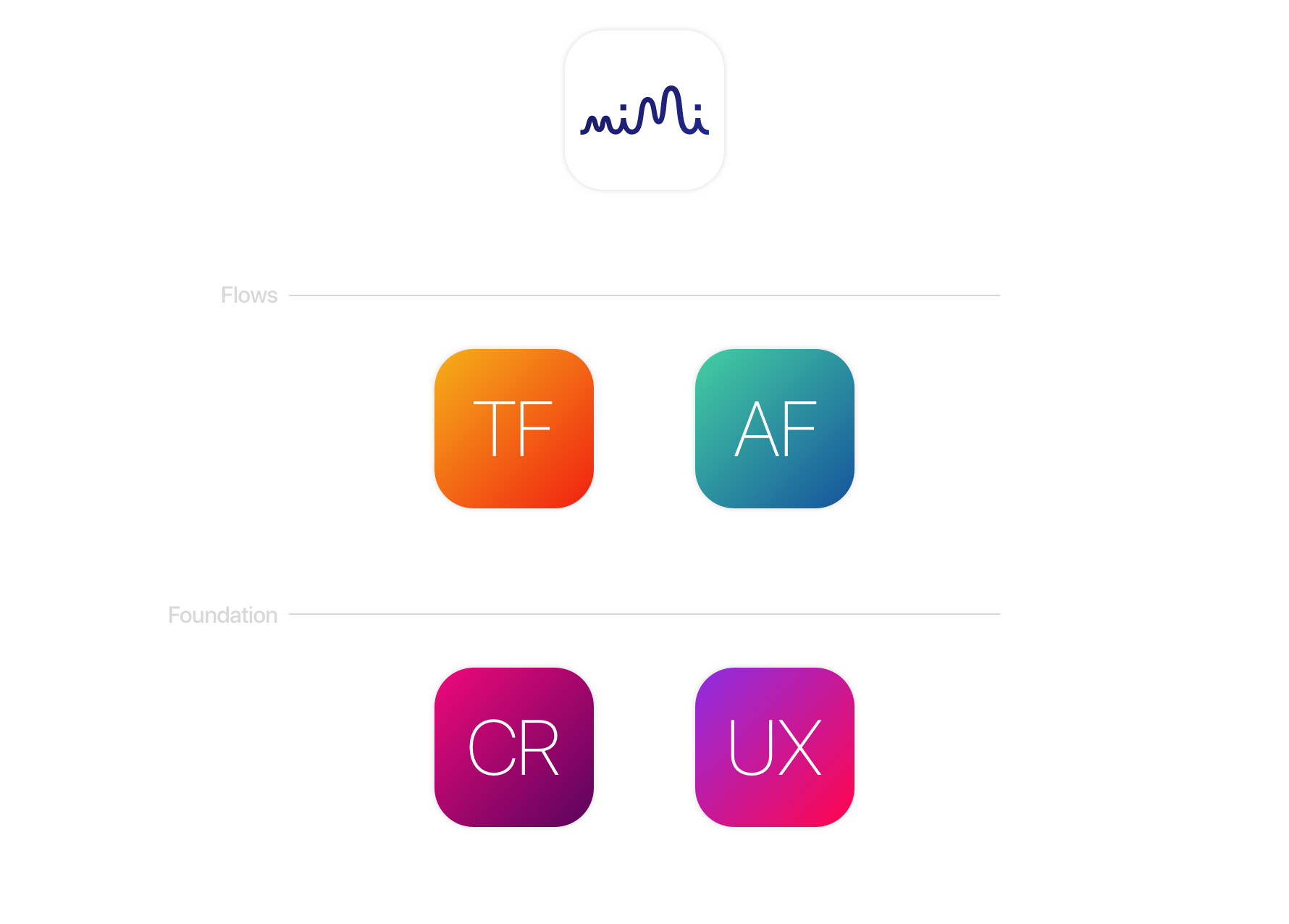

MimiSDK

The SDK acts as an umbrella for all the other frameworks, providing the easiest entry point into Mimi. It contains the Profile, a series of flexible views that provide insulated access to Mimi features and functionality.

MimiTestKit

MimiTestKit contains the UI flow for taking a Mimi Hearing Test, opening the gateway to creating a Mimi Hearing ID for a user. MimiTestKit handles all aspects of the Hearing Test, from set-up and practice to execution and submission.

MimiAuthKit

MimiAuthKit provides the UI flow for authenticating a user with Mimi. It is relatively simple: a user can use MimiAuthKit to log in to an existing Mimi account and reset their password.

MimiUXKit

MimiUXKit contains a collection of shared UI/UX components that provide the visual core of other parts of the SDK. It consists of our custom buttons, controls, labels, checkboxes and other UIKit wizardry.

MimiCoreKit

MimiCoreKit provides the low-level APIs for interacting with Mimi services. Acting as the focal point for all data and communication, MimiCoreKit can be used to access raw data models, observe logic events and interact with raw Mimi APIs.

MimiSDK Reference
